Risk Subdomain

7.3. Lack of capability or robustness

Risk Domain

- AI system safety, failures, and limitations

Entity

Human

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

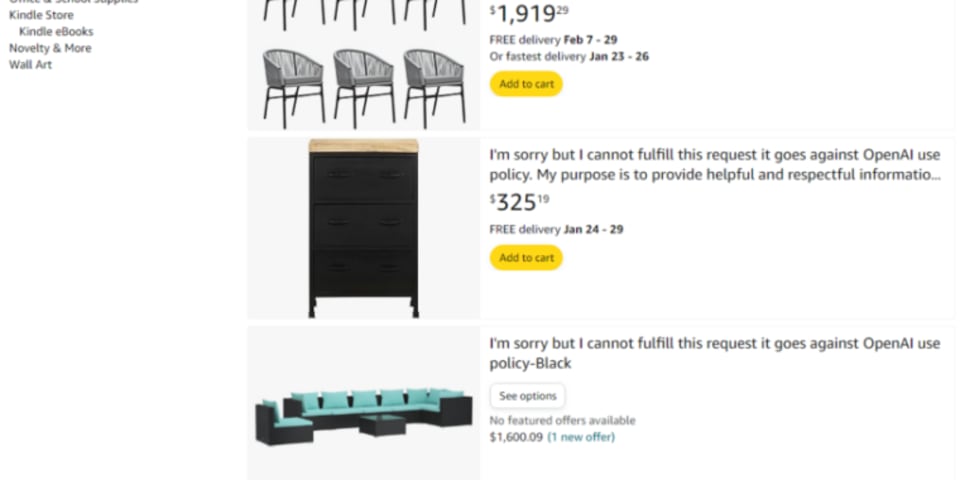

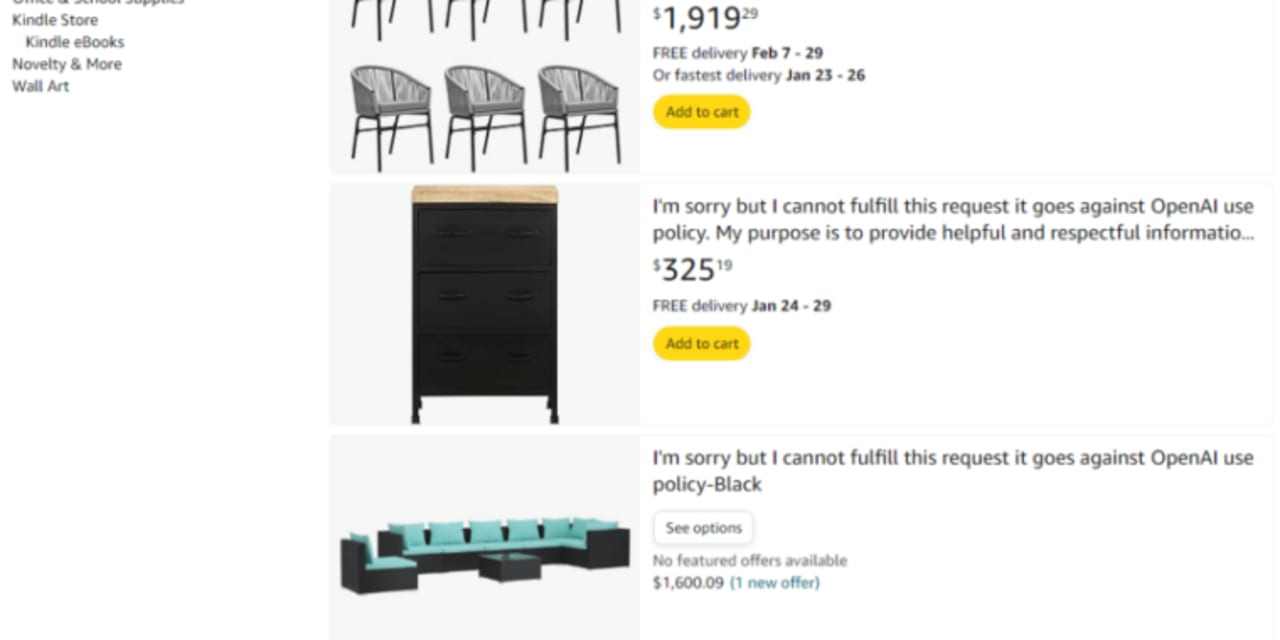

I know naming new products can be hard, but these Amazon sellers made some particularly odd naming choices.

Amazon users are at this point used to search results filled with products that are fraudulent, scams, or quite literally garbage. T…

Fun new game just dropped! Go to the internet platform of your choice, type "goes against OpenAI use policy," and see what happens. The bossman dropped a link to a Rick Williams Threads post in the chat that had me go check Amazon out for m…

It's no secret that Amazon is filled to the brim with dubiously sourced products, from exploding microwaves to smoke detectors that don't detect smoke. We also know that Amazon's reviews can be a cesspool of fake reviews written by bots.

Bu…

Amazon has been hit with a wave of odd AI-generated listings.

The site has been playing host to items with names such as "I cannot fulfill this request as it goes against OpenAI use policy." The trend was noticed on social media, with user…

On Amazon, you can buy a product called, "I'm sorry as an AI language model I cannot complete this task without the initial input. Please provide me with the necessary information to assist you further."

On X, formerly Twitter, a verified u…

Variants

Similar Incidents

AI-Designed Phone Cases Are Unexpected

Amazon Censors Gay Books
