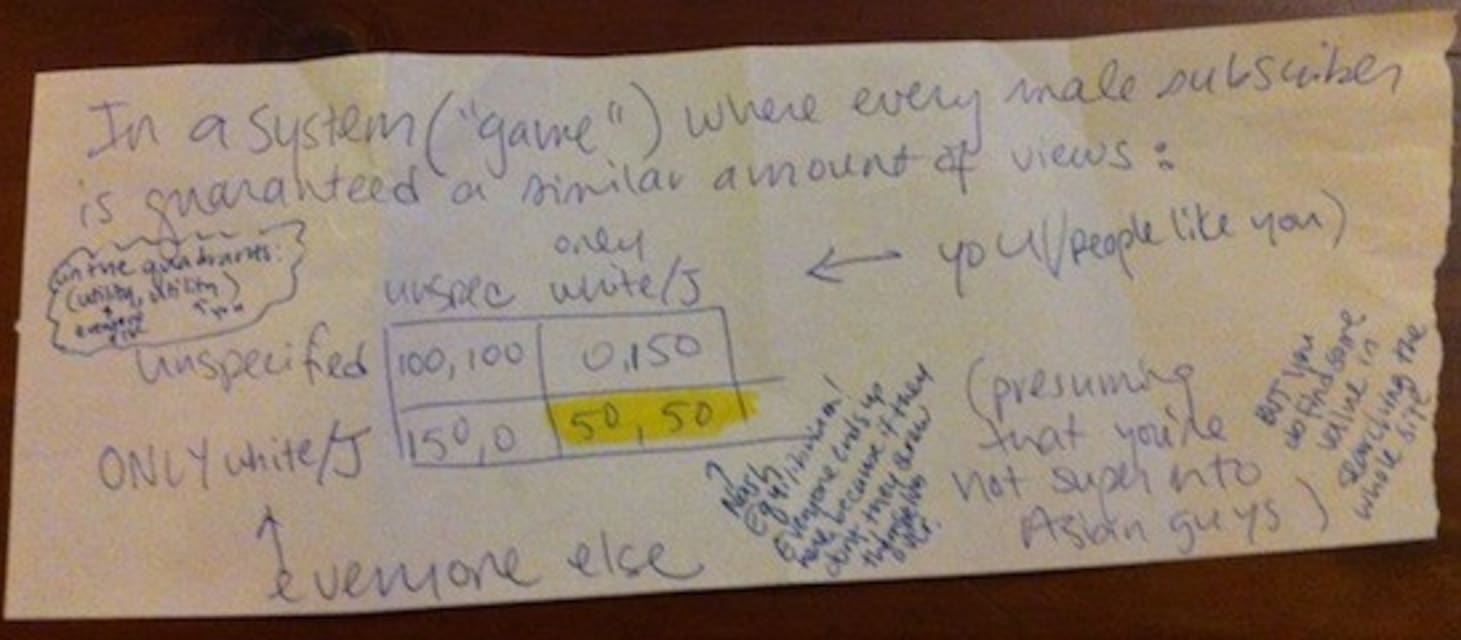

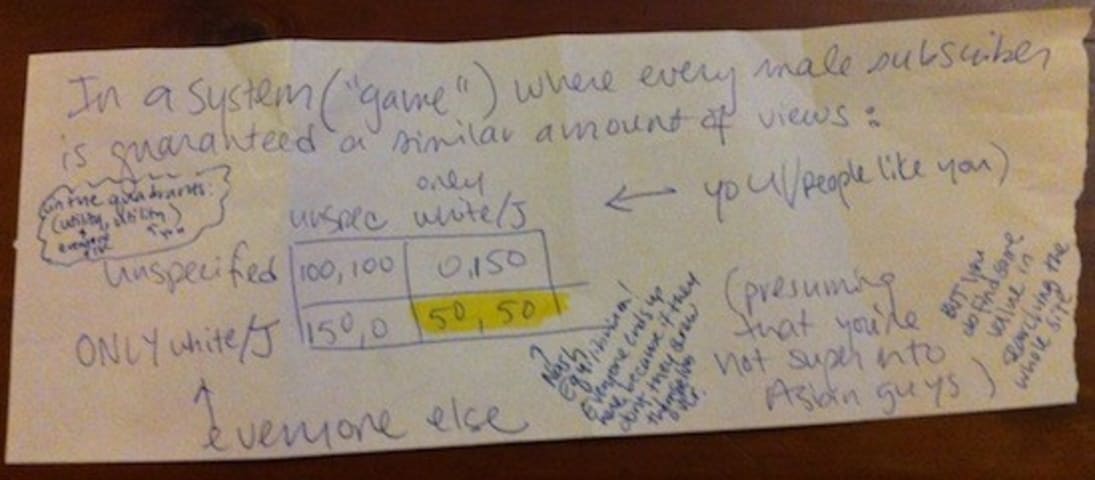

Description: Coffee Meets Bagel’s matching algorithm showed users who selected “no preference” for ethnicity more potential matches of their own ethnicity. The company’s founder acknowledged this and justified it as a way to maximize connection rates when the app lacked sufficient information about a user.

Entities

Alleged: Coffee Meets Bagel developed and deployed an AI system, which harmed Coffee Meets Bagel users having no ethnicity preference and Coffee Meets Bagel users.

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

1.1. Unfair discrimination and misrepresentation

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

I have nothing against Asian guys.

In fact, when my roommate told me the other night that he sometimes sees John Cho (Harold, of Harold and Kumar) at his gym, I squealed. I briefly considered joining the gym, but then I remembered I've Goog…

Yet, it seems like a relatively common experience, even if you aren’t from a minority group.

Amanda Chicago Lewis (who now works at BuzzFeed) wrote about her similar experience on Coffee Meets Bagel for LA Weekly: “I've been on the site fo…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Similar Incidents
