CSETv1 Taxonomy Classifications

Taxonomy Details

Incident Number

509

Special Interest Intangible Harm

maybe

Date of Incident Year

2023

Date of Incident Month

03

Date of Incident Day

Estimated Date

No

Risk Subdomain

4.3. Fraud, scams, and targeted manipulation

Risk Domain

4. Malicious Actors & Misuse

Entity

Human

Timing

Post-deployment

Intent

Intentional

Incident Reports

Reports Timeline

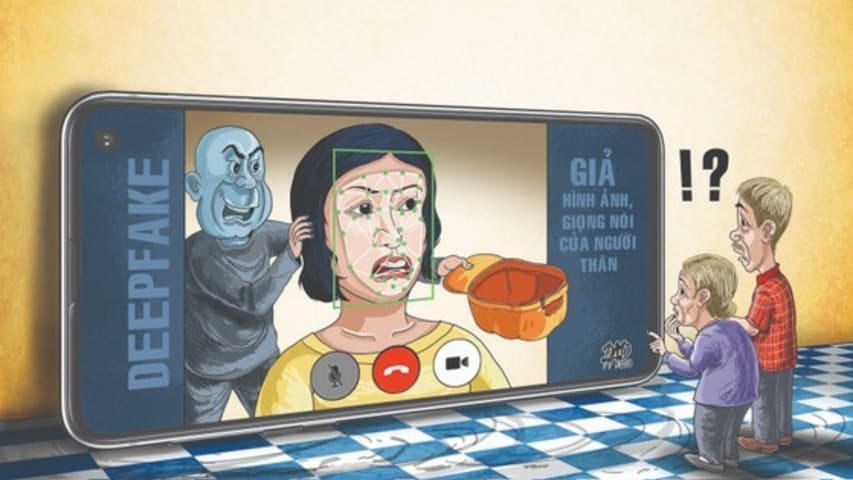

(ĐTTCO) - Following the scam of sending text messages asking for money transfers, scammers have quickly adopted a more sophisticated method: fake video calls made with Deepfake technology.

Deceiving users

Nearly a week after being scammed out of…

After their trick of sending text messages to misappropriate money was exposed, scammers are now using an even more sophisticated tool, Deepfake, to make fake video calls to victims and ask them to transfer money.

Nearly a week after discoverin…

Variants

Similar Incidents
