Incident 587: Apparent Failure to Accurately Label Primates in Image Recognition Software Due to Alleged Fear of Racial Bias

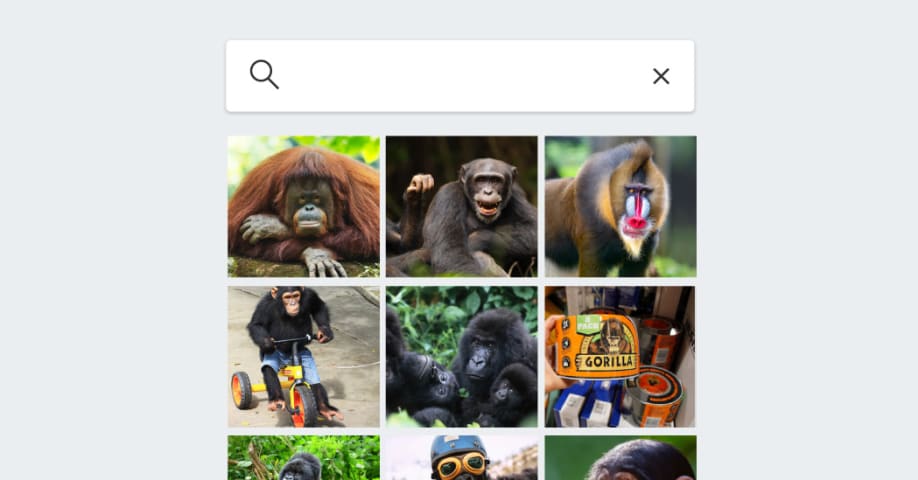

Description: Eight years after Google Photos mislabeled images of Black people as "gorillas," image recognition software from Google, Apple, Amazon, and Microsoft continues to avoid labeling primates or to categorize them incorrectly. Tests show that Google Photos and Apple Photos refrain entirely from labeling primates, probably to avoid perpetuating racial stereotypes. Microsoft OneDrive does not identify any animals, while Amazon Photos overgeneralizes its labels.

The OECD AI Incidents and Hazards Monitor (AIM) automatically collects and classifies AI-related incidents and hazards in real time from reputable news sources worldwide.

Entities

Alleged: An AI system developed and deployed by Google, Apple, Amazon, and Microsoft harmed consumers relying on accurate image categorization and members of racial and ethnic minorities who risk being stereotyped or misrepresented.

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

1.1. Unfair discrimination and misrepresentation

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Report Timeline

Eight years after a controversy over Black people being mislabeled as gorillas by image analysis software, and despite major advances in computer vision, tech giants still fear repeating the…

Variants

A "variant" is an AI incident similar to a known case: it has the same causes, the same harms, and the same intelligent system. Rather than listing it separately, we include it under the first reported incident. Unlike other incidents, variants need not have been reported outside the incident database. Learn more from the research paper.

Similar Incidents

Wikipedia Vandalism Prevention Bot Loop

· 6 reports

Predictive Policing Biases of PredPol

· 17 reports

Northpointe Risk Models

· 15 reports
