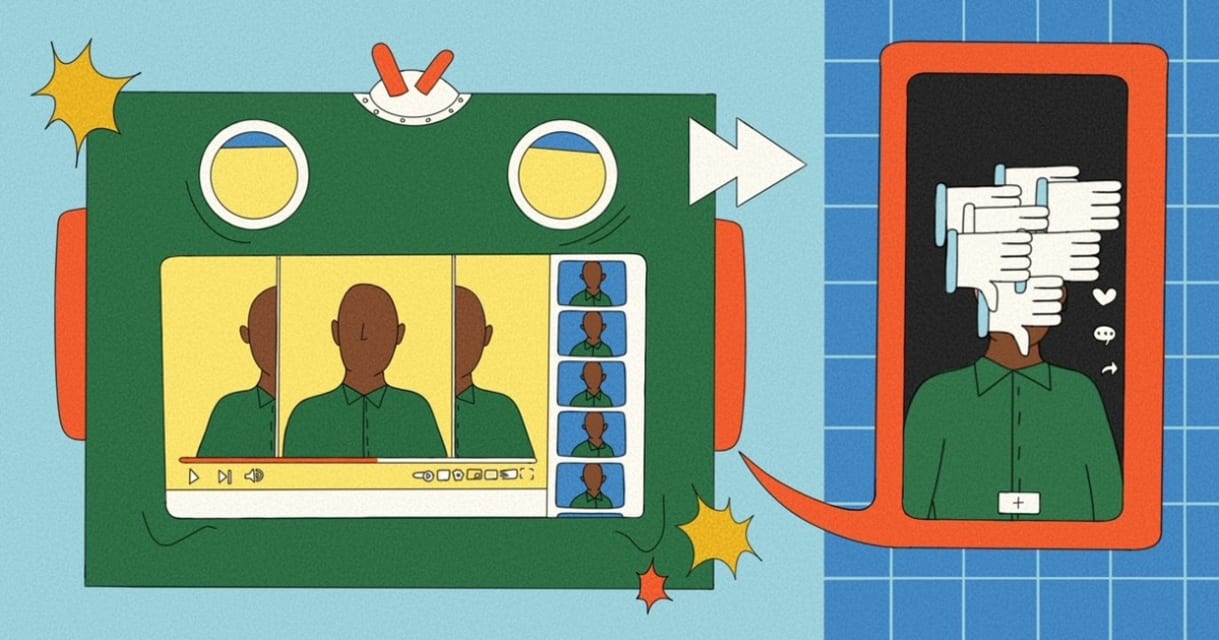

Incident 635: AI-Generated Fake News Targets Black Celebrities on YouTube

Description: YouTube faced a wave of AI-generated fake news targeting Black celebrities, including false narratives about Sean "Diddy" Combs and others. These videos, which combined AI-generated and manipulated content, accumulated millions of views, challenging content moderation efforts and highlighting the spread of disinformation.

Entities

Alleged: Unknown generative AI tools creators and Unknown AI text-to-speech technology developers developed an AI system deployed by Variety of YouTube content creators, which harmed Steve Harvey, Sean "Diddy" Combs, General public, Denzel Washington, Black celebrities and Bishop T.D. Jakes.

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI.

3.1. False or misleading information

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Misinformation

Entity

Which, if any, entity is presented as the main cause of the risk

Human

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

Report Timeline

YouTube videos using a mix of artificial intelligence-generated and manipulated media to create fake content have flooded the platform with salacious disinformation about dozens of Black celebrities, including rapper and record executive Se…

Variants

A "variant" is an AI incident similar to a known case: it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Similar Incidents

Selected by our editors
