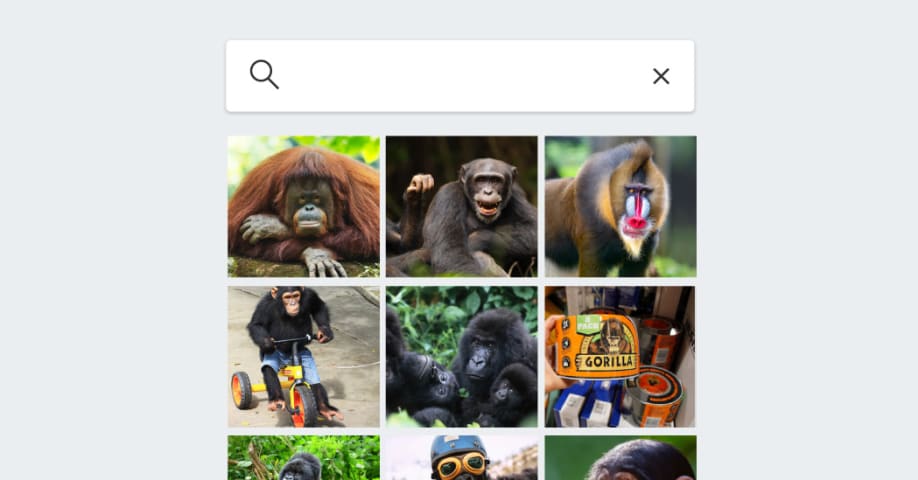

Incident 587: Apparent Failure to Accurately Label Primates in Image Recognition Software Due to Alleged Fear of Racial Bias

Description: Eight years after Google Photos mislabeled images of Black people as "gorillas," image recognition software from Google, Apple, Amazon, and Microsoft still shows signs of avoiding or miscategorizing primates. Tests reveal that Google Photos and Apple Photos refrain entirely from labeling primates, possibly to avoid the risk of perpetuating racial stereotypes. Microsoft OneDrive fails to identify any animals, while Amazon Photos overgeneralizes in its labels.

The OECD AI Incidents and Hazards Monitor (AIM) automatically collects and classifies AI-related incidents and hazards in real time from reputable news sources worldwide.

Entities

Alleged: An AI system developed and deployed by Google, Apple, Amazon, and Microsoft harmed consumers relying on accurate image categorization and members of racial and ethnic minorities who risk being stereotyped or misrepresented.

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

1.1. Unfair discrimination and misrepresentation

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Reports Timeline

Eight years after a controversy over image analysis software mislabeling Black people as gorillas, and despite major advances in computer vision, tech giants still fear…

Variants

A "variant" is an AI incident similar to a known case: it shares the same causes, harms, and AI system. Rather than listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more in the research paper.

Similar Incidents

- Wikipedia Vandalism Prevention Bot Loop · 6 reports
- Predictive Policing Biases of PredPol · 17 reports
- Northpointe Risk Models · 15 reports
