Incident 24: Robot kills worker at a German Volkswagen plant

Entities

CSETv0 Taxonomy Classifications

Public Sector Deployment

No

Infrastructure Sectors

Critical manufacturing

Lives Lost

Yes

Intent

Accident

Near Miss

Harm caused

Ending Date

2015-06-24T07:00:00.000Z

CSETv1 Taxonomy Classifications

Incident Number

24

AI Tangible Harm Level Notes

A robot crushed a worker at a Volkswagen factory. However, no AI was linked to the robot. Volkswagen determined that this death was due to human error.

Special Interest Intangible Harm

no

Date of Incident Year

2015

Date of Incident Month

06

Date of Incident Day

29

Risk Subdomain

7.3. Lack of capability or robustness

Risk Domain

- AI system safety, failures, and limitations

Entity

Human

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Report Timeline

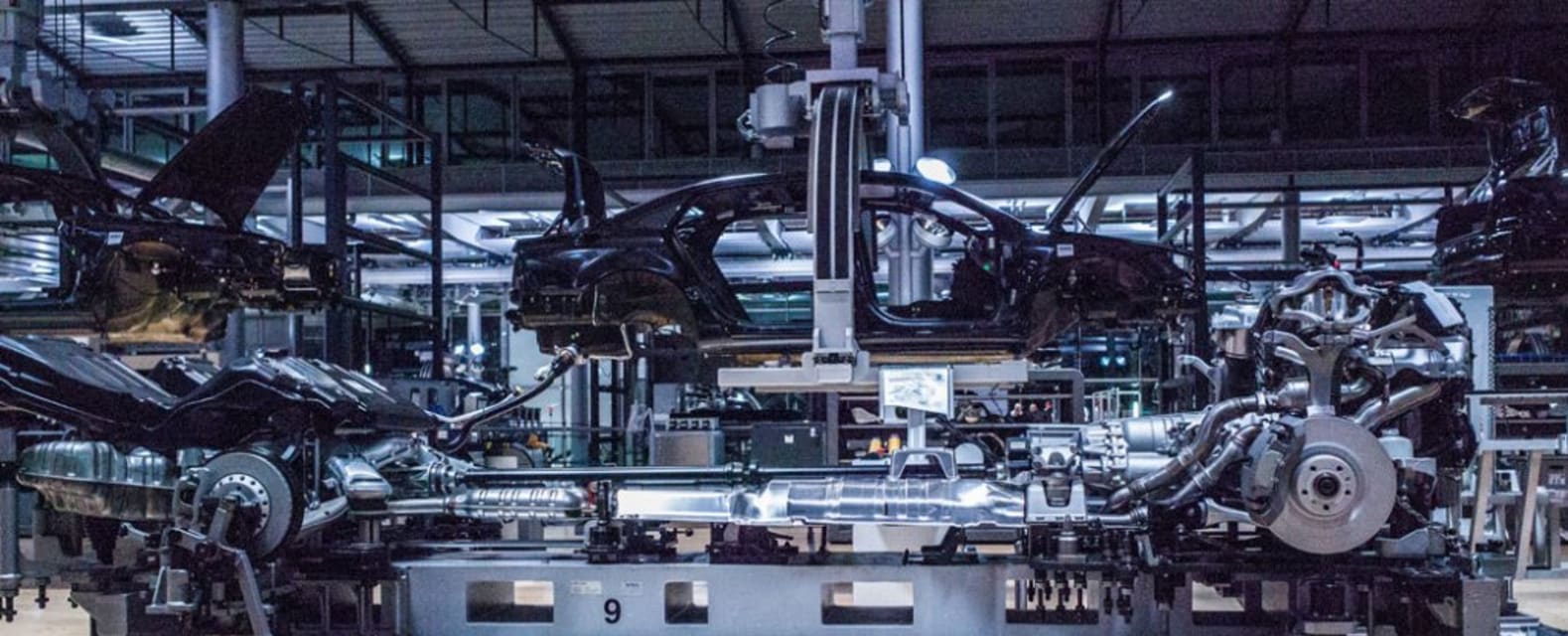

A technician died from injuries inflicted by a robot at a Volkswagen (VLKPY) plant near Kassel, Germany.

The technician was installing the robot with a colleague when it struck him in the chest and pressed him ag…

A worker at a Volkswagen factory in Germany has died after a robot grabbed him and crushed him against a metal plate.

The 22-year-old man died in hospital after the accident at a plant in Baunatal, 100 k…

The incident occurred on Monday at a Volkswagen factory in Baunatal, Hesse, while the 21-year-old, who was employed by another company, was working on assembling the robot for a new electric motor production line.

When the robot was put in…

ChinaFotoPress/Getty A robot killed a worker at a Volkswagen plant in Germany, the FT reports. The 21-year-old worker was installing the robot when it struck him in the chest, crushing him against a plate. He died after the incid…

A robot killed a contractor at one of Volkswagen's production plants in Germany, the automaker said.

The 22-year-old was part of a team that was installing the stationary robot when it grabbed him and…

A 22-year-old worker at a Volkswagen assembly plant in Germany died on Monday after being killed by a robot.

The man was inside a metal cage installing the stationary robot when it reached out and grabbed him, crush…

Story highlights: State prosecutor's office confirms death

Local media report that the robot grabbed the worker who was installing it.

(CNN) A robot on an automobile assembly line killed a worker at a car fac…

A 22-year-old man was killed yesterday by a robot at a Volkswagen plant in Baunatal, Germany.

Wire services report that the robot crushed the man against a metal plate.

The FT says VW has stated that the robot did not …

Automaker Volkswagen says a robot killed a contractor at one of its production plants in Germany.

A VW spokesman says the man died on Monday at the Baunatal plant, about 100 kilometers (62 miles)…

July 2, 2015, 2:43 a.m. GMT / Updat…

A worker at a Volkswagen factory in Germany has died after a robot grabbed him and crushed him against a metal plate.

The 22-year-old man died in hospital following the tragic incident at a plant in Baunatal …

The 22-year-old worker died from injuries he suffered when he was trapped by a robotic arm and crushed against a metal plate.

The man, who has not been named, was part of a team that was ass…

Welding robots work on a Golf V assembly line at the Volkswagen plant in Wolfsburg, northern Germany, in this 2004 file photo. (Photo: FABIAN BIMMER, AP)

A Volkswagen worker was killed b…

A 22-year-old German worker has died after an accident involving a robot at a Volkswagen production plant in the town of Baunatal, about 100 km north of Frankfurt.

According to company spokesman Heiko Hillwig, the contractor…

The contractor was installing the stationary robot when it grabbed him and crushed him against a metal plate at the Baunatal plant.

A robot killed a contractor at…

Shutterstock

A contractor at a Volkswagen production plant in Germany was killed by a robot that grabbed him and crushed him against a metal plate.

The 22-year-old man was a member of a team tasked with setting u…

The Computing Digital Technology Leaders Awards exist to recognize the achievements of the people and companies who are really making it happen at the core of the digital technology stack: from design and cod…

A rogue robot has killed a technician at a car factory in an accident with chilling overtones of a science fiction film.

The 22-year-old man was picked up and crushed by an automated arm while working on…

A robot crushed a worker at a Volkswagen production plant in Germany, the company said on Wednesday.

A 22-year-old man was helping to assemble the stationary robot, which grabs and configures auto parts, on Monday when the m…

A technician was killed by a robot in an accident at a Volkswagen plant near Kassel, Germany, the Financial Times reports.

According to the report, "a 21-year-old external contractor was installing the robot together with a colleague whe…

A 22-year-old man was helping to assemble the stationary robot, which grabs and configures auto parts, on Monday when the machine grabbed him and pushed him against a metal plate, the Associated Press reported. He later died from his injur…

A 22-year-old contractor died at a Volkswagen factory in Germany after a stationary robot he was helping to set up grabbed him and crushed him against a metal plate.

VW spokesman Heiko Hillwig confirmed that the…

A 22-year-old subcontracted worker died last week after being crushed by a stationary robot at Volkswagen's Baunatal plant north of Frankfurt.

Media reports on the accident said the man …

A robot has crushed a man to death at a Volkswagen production plant in Germany. The company confirmed the death on Wednesday.

The 22-year-old German worker was a technician installing a stationary robot, which is…

If you grew up in the U.S., you have probably seen at least one episode of The Jetsons, a 1960s cartoon depicting a futuristic 21st-century society with fast food, floating cities and a robot named …

The specter haunting Europe, and the rest of the world, is no longer communism, as Karl Marx and Friedrich Engels wrote in their famous 1848 manifesto. It is something far more insidious, and something Marx and Engels could hardly have im…

Photo courtesy of HBO

In 2015, a stationary robot grabbed and crushed a worker at a Volkswagen factory in Germany. In 2016, an Ohio man died when the self-driving Tesla he was riding in crashed into a truck with a trai…

Variants

Similar Incidents
