Entities

CSETv0 Taxonomy Classifications

Problem Nature

Specification

Physical System

Software only

Level of Autonomy

Low

Nature of End User

Expert

Public Sector Deployment

No

Data Inputs

names, age, location, position, job, COVID-19 tests

CSETv1 Taxonomy Classifications

Incident Number

91

Risk Subdomain

1.1. Unfair discrimination and misrepresentation

Risk Domain

- Discrimination and Toxicity

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Update, Dec. 18, 2020: This story has been updated to add comments from Stanford Medicine.

Stanford Medicine residents who work in close contact with COVID-19 patients were left out of the first wave of staff members for the new Pfizer vacc…

Stanford Health Care apologized Friday for a plan that left nearly all of its young front-line doctors out of the first round of coronavirus vaccinations. The Palo Alto, Calif., medical center promised an immediate fix that would move the p…

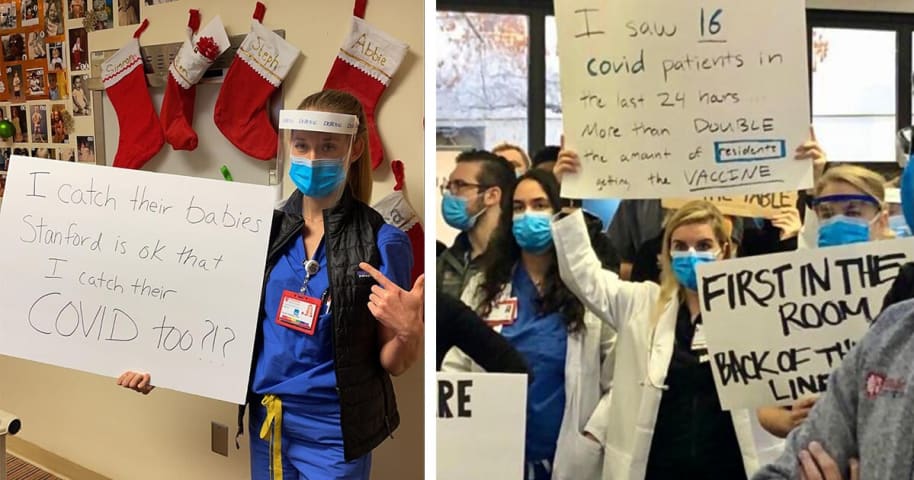

Medical residents and nurses from Stanford Medical Center held a protest on Friday following the hospital choosing to vaccinate some staff members who don’t interact with coronavirus patients over other frontline workers.

Video footage from…

An algorithm determining which Stanford Medicine employees would receive its 5,000 initial doses of the COVID-19 vaccine included just seven medical residents / fellows on the list, according to a December 17th letter sent from Stanford Med…

When resident physicians at Stanford Medical Center—many of whom work on the front lines of the covid-19 pandemic—found out that only seven out of over 1,300 of them had been prioritized for the first 5,000 doses of the covid vaccine, they …

Variants

Similar Incidents
