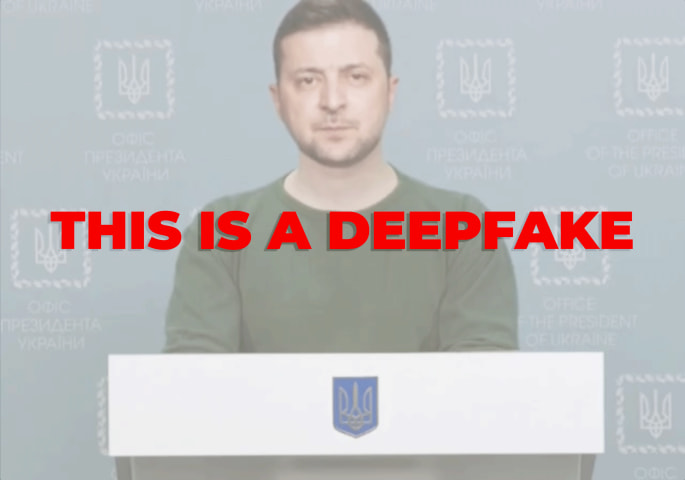

Description: A deepfake video falsely showed U.S. State Department spokesman Matthew Miller suggesting that the Russian city of Belgorod was a legitimate target for Ukrainian strikes. The disinformation spread on Telegram and in Russian media, misleading the public and inciting tensions. U.S. officials condemned the deepfake. The incident illustrates the threat of AI-powered disinformation and hybrid attacks.

The OECD AI Incidents and Hazards Monitor (AIM) automatically collects and classifies AI-related incidents and hazards in real time from reputable news sources worldwide.

Entities

Alleged: Unknown deepfake creators developed an AI system deployed by the Russian government, which harmed Matthew Miller, the Department of State, and the Biden administration.

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

4.1. Disinformation, surveillance, and influence at scale

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

4. Malicious Actors & Misuse

Entity

Which, if any, entity is presented as the main cause of the risk

Human

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

A day after U.S. officials said Ukraine could use American weapons in limited strikes inside Russia, a deepfake video of a U.S. spokesman discussing the policy appeared online.

The fabricated video, which is drawn from actual footage, shows…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.
