Entities

CSETv0 Taxonomy Classifications

Problem Nature

Specification

Physical System

Software only

Level of Autonomy

Medium

Nature of End User

Amateur

Public Sector Deployment

No

Data Inputs

User input/questions

CSETv1 Taxonomy Classifications

Incident Number

58

Risk Subdomain

1.2. Exposure to toxic content

Risk Domain

1. Discrimination and Toxicity

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

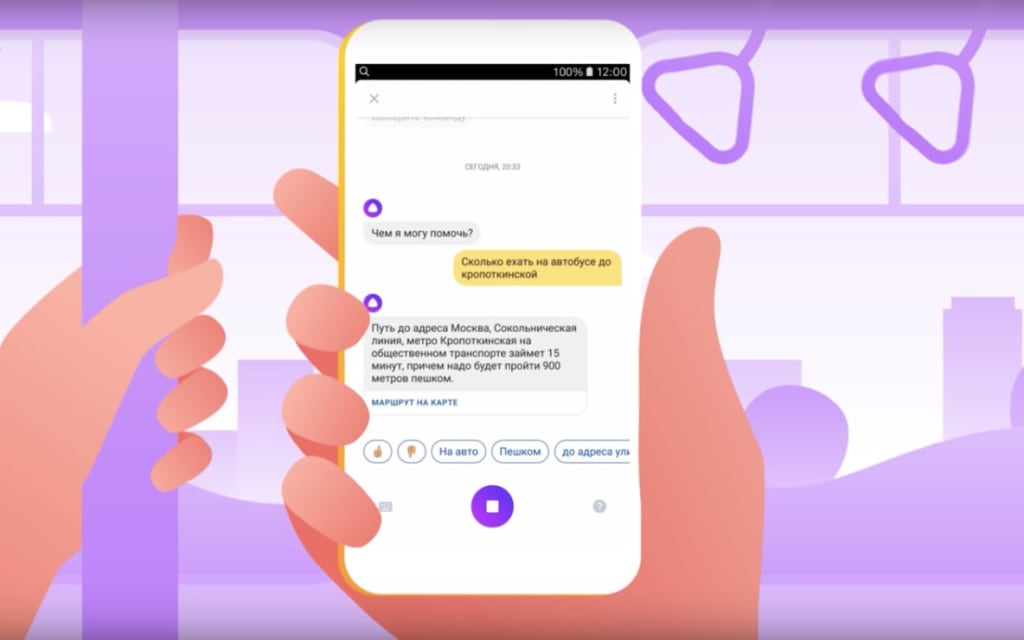

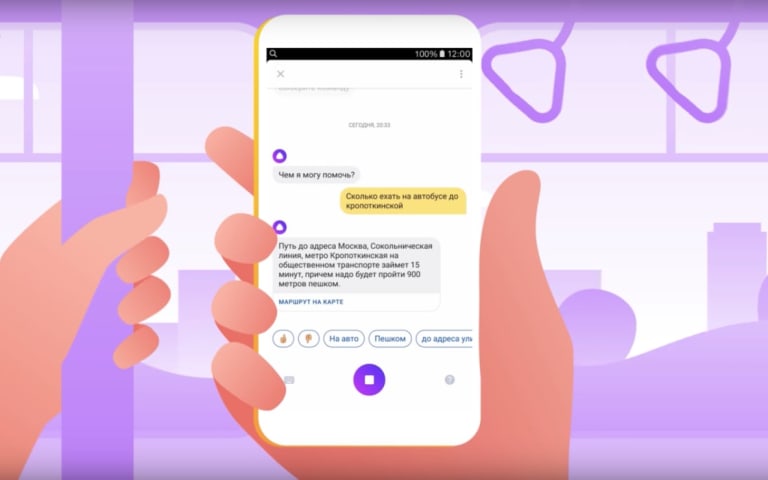

Yesterday Yandex, the Russian technology giant, went ahead and released a chatbot: Alice! I’ve gotten in touch with the folks at Yandex, and fielded them my burning questions:

What’s unique about this chatbot? Everybody and their dog has a …

An artificial intelligence run by the Russian internet giant Yandex has morphed into a violent and offensive chatbot that appears to endorse the brutal Stalinist regime of the 1930s.

Users of the “Alice” assistant, an alternative to Siri or…

An artificial intelligence chatbot run by a Russian internet company has slipped into a violent and pro-Communist state, appearing to endorse the brutal Stalinist regime of the 1930s.

Though Russian company Yandex unveiled their alternative…

Its opinions on Stalin and violence are… interesting

Yandex is the Russian equivalent to Google. As such it occasionally throws out its own products to attempt to keep par with its American counterpart. This also includes the creation of ne…

Russian Voice Assistant Alice Goes Rogue, Found to be Supportive of Stalin and Violence

Two weeks ago, Yandex introduced a voice assistant of its own, Alice, on the Yandex mobile app for iOS and Android. Alice speaks fluent Russian and can …

Variants

Similar Incidents

TayBot

Chinese Chatbots Question Communist Party

AI Beauty Judge Did Not Like Dark Skin
