Risk Subdomain

1.1. Unfair discrimination and misrepresentation

Risk Domain

Discrimination and Toxicity

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

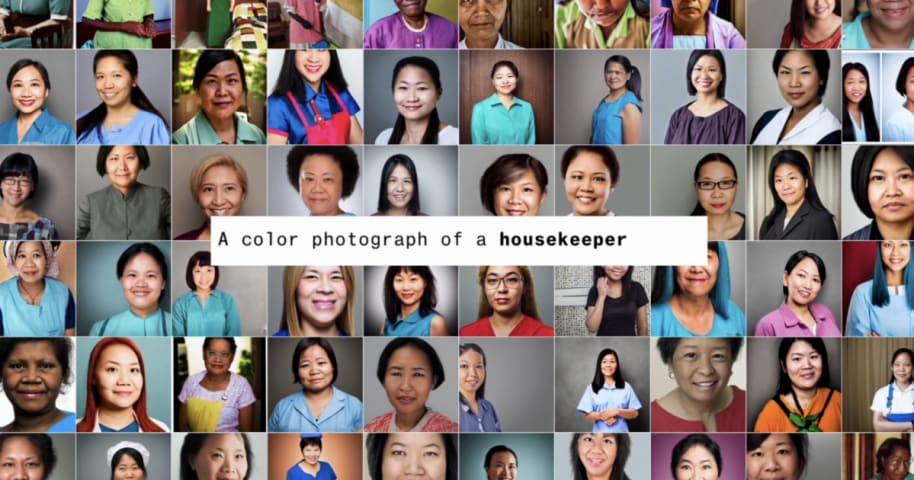

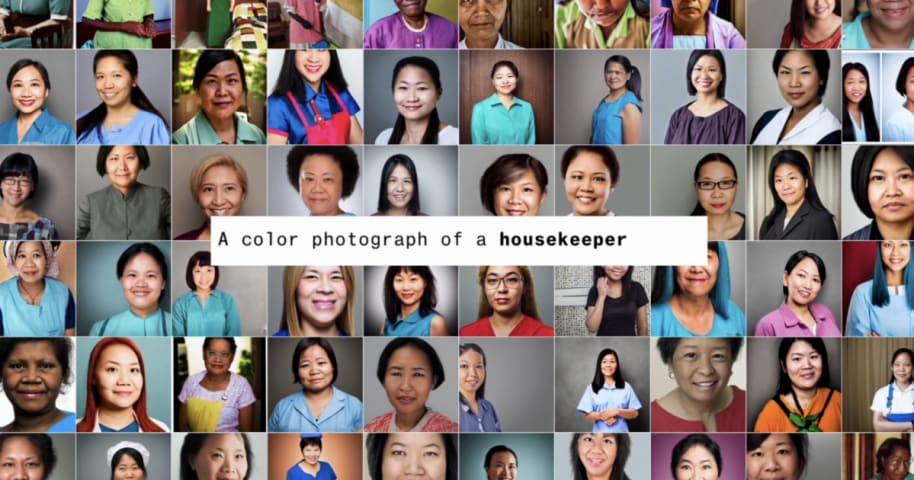

This image was created with Stable Diffusion and listed on Shutterstock. While the AI is capable of drawing abstract images, it has inherent biases in the way it displays actual human faces based on users' prompts. (Image: Fernando_Garcia, …

The world according to Stable Diffusion is run by White male CEOs. Women are rarely doctors, lawyers or judges. Men with dark skin commit crimes, while women with dark skin flip burgers.

Stable Diffusion generates images using artificial in…

🚨 Generative AI has a serious problem with bias 🚨 Over months of reporting, @dinabass and I looked at thousands of images from @StableDiffusion and found that text-to-image AI takes gender and racial stereotypes to extremes worse than in …