CSETv1 Taxonomy Classifications

Incident Number

526

Notes (special interest intangible harm)

Annotator 1:

4.2 - There are no established legal standards on whether AI deepfakes of artists' voices violate copyright law.

Special Interest Intangible Harm

no

Date of Incident Year

2023

Date of Incident Month

04

Date of Incident Day

Risk Subdomain

6.3. Economic and cultural devaluation of human effort

Risk Domain

6. Socioeconomic & Environmental Harms

Entity

Human

Timing

Post-deployment

Intent

Intentional

Incident Reports

Reports Timeline

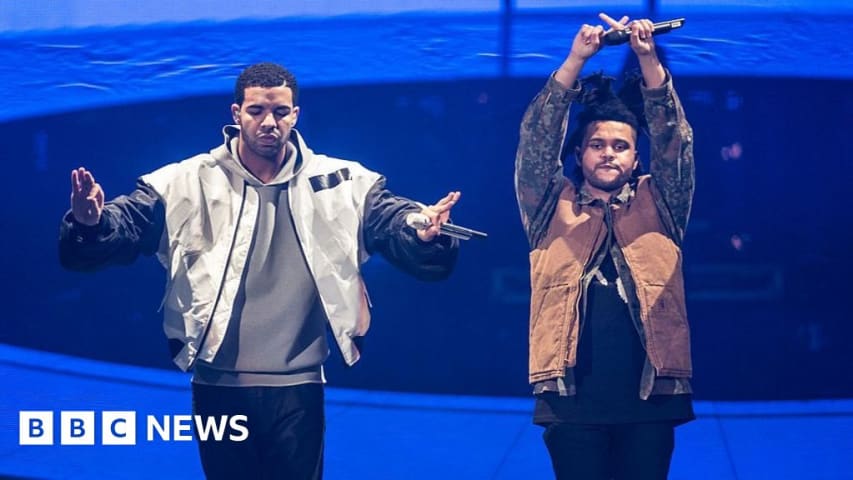

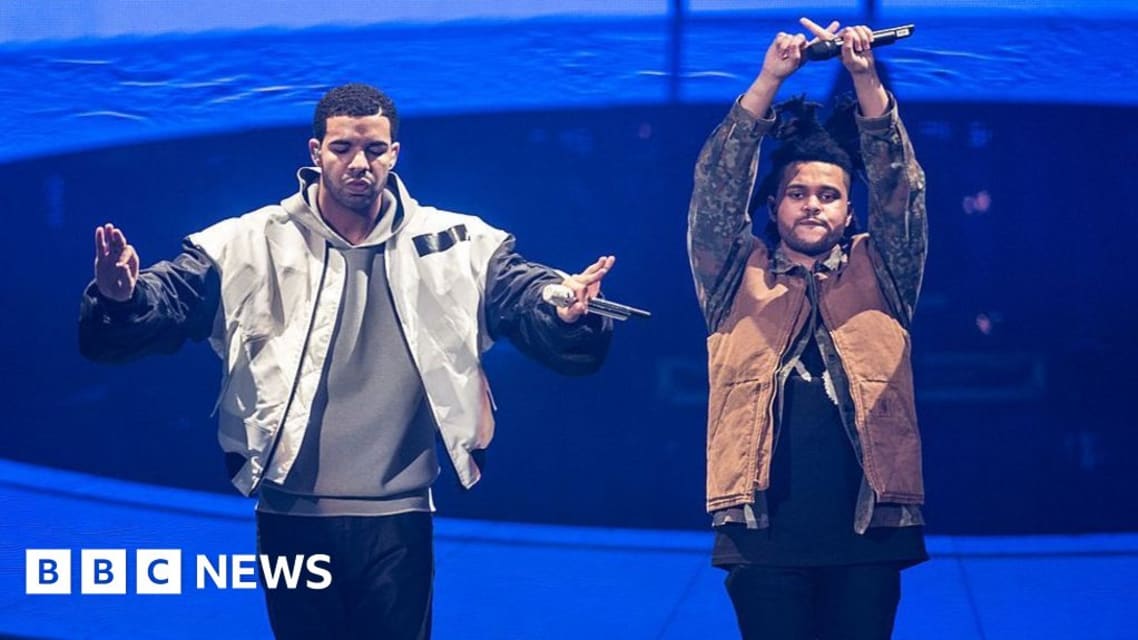

A song that uses artificial intelligence to clone the voices of Drake and The Weeknd is being removed from streaming services.

Heart On My Sleeve is no longer available on Apple Music, Spotify, Deezer and Tidal.

It is also in the process of…


The AI Drake track that mysteriously went viral over the weekend is the start of a problem that will upend Google in one way or another — and it’s really not clear which way it will go.

Here’s the basics: there’s a new track called “Heart o…

Variants

Similar Incidents
