Incident Stats

Risk Subdomain

1.1. Unfair discrimination and misrepresentation

Risk Domain

- Discrimination and Toxicity

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Introduction

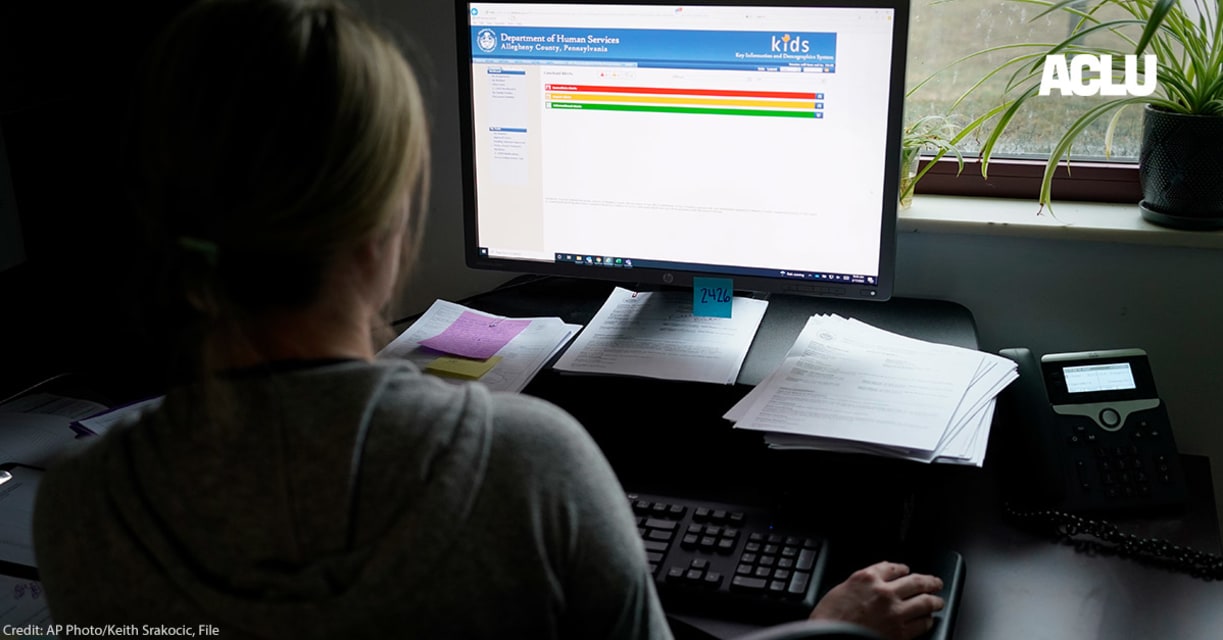

In 2017, the creators of the Allegheny Family Screening Tool (AFST) published a report describing the development process for a predictive tool used to inform responses to calls to Allegheny County, Pennsylvania’s child welfare…

You hear a knock on your door. Expecting a neighbor or perhaps a delivery, you open it, only to find a child welfare worker demanding entry. It doesn’t seem like you can refuse so you let them in and watch as they search every room, rummagi…

PITTSBURGH (AP) — For the two weeks that the Hackneys’ baby girl lay in a Pittsburgh hospital bed weak from dehydration, her parents rarely left her side, sometimes sleeping on the fold-out sofa in the room.

They stayed with their daughter …
