Incident Stats

Risk Subdomain

7.3. Lack of capability or robustness

Risk Domain

AI system safety, failures, and limitations

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

- Created an article using GPT-3.

- Used Originality AI to detect whether the content was AI or human generated. Score: 100% AI

- Prompted GPT-3 to rewrite the article "to avoid detection by AI content detection tools".

- New Score: 68% Human

Fai…

In response to the growing concern from educators over ChatGPT’s ability to help students cheat, OpenAI released a tool on Tuesday that can detect AI-written text. However, the company said, “Our classifier is not fully reliable.”

“In our …

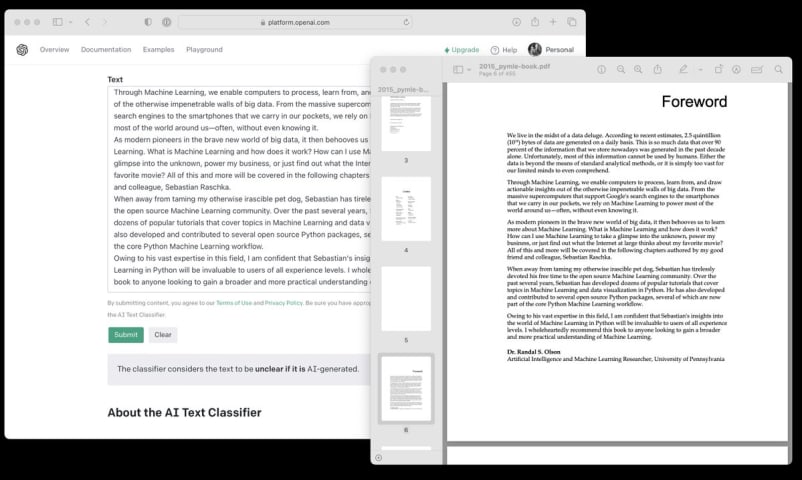

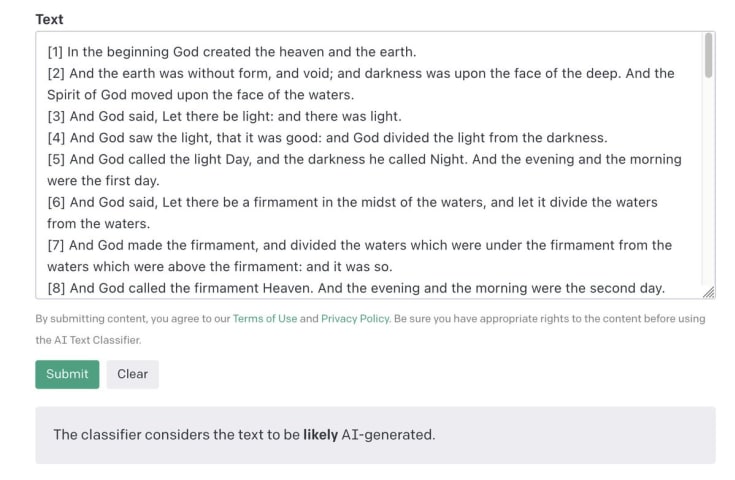

OpenAI just launched the "AI Text Classifier" to identify texts generated by AI. Tried it, and IT DOES NOT WORK. https://platform.openai.com/ai-text-classifier

Using my Python ML book published in 2015:

- @randal_olson's foreword: unclear

- my…

OpenAI released a new classifier tool yesterday to detect AI-generated text that, within a few hours, proved to be imperfect, at best. It turns out that when it comes to detecting generative AI, whether it is text or images, there may be…

OpenAI is responding to widespread concern about the technology it's put out into the world by releasing an AI text detector that is accurate 26% of the time and yields false positives 9% of the time

welp

it appears the problem of distingui…
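To put the 26% and 9% figures quoted above in context, here is a minimal sketch of what they imply for a single flagged text. It assumes "accurate 26% of the time" means the true positive rate (the share of AI-written text the classifier flags), which is how OpenAI described the figure. The function name and the two base rates are hypothetical and chosen only for illustration.

```python
def posterior_ai_given_flag(tpr: float, fpr: float, base_rate: float) -> float:
    """P(text is AI-written | classifier flags it), computed with Bayes' rule."""
    flagged_ai = tpr * base_rate              # AI-written texts that get flagged
    flagged_human = fpr * (1.0 - base_rate)   # human-written texts flagged by mistake
    return flagged_ai / (flagged_ai + flagged_human)


TPR = 0.26  # reported true positive rate
FPR = 0.09  # reported false positive rate

# Assumed shares of AI-written text in the pool being checked (not from the reports).
for base_rate in (0.1, 0.5):
    p = posterior_ai_given_flag(TPR, FPR, base_rate)
    print(f"base rate {base_rate:.0%}: P(AI | flagged) = {p:.2f}")
```

Under these assumptions, if only one text in ten is AI-written, roughly three out of four flagged texts would actually be human-written, so the base rate matters as much as the headline percentages.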

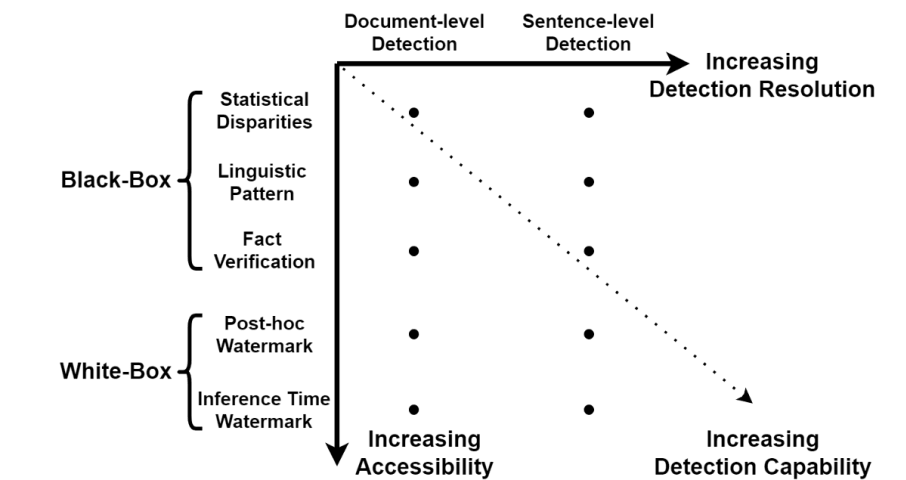

Recent advancements in natural language generation (NLG) technology have significantly improved the diversity, control, and quality of LLM-generated texts. A notable example is OpenAI’s ChatGPT, which demonstrates exceptional performance in…

A month of ChatGPT-fuelled news

This past month was clearly dominated by ChatGPT news, headlined by OpenAI’s announcement of $10b of new investment from Microsoft in return for a 49% stake and plans for extensive product integrations. Open…

Variants

Similar Incidents
