Description: Facebook's, Instagram's, and Twitter's automated content moderation failed to proactively remove racist remarks and posts directed at Black football players after a finals loss, allegedly relying largely on user reports of harassment.

Entities

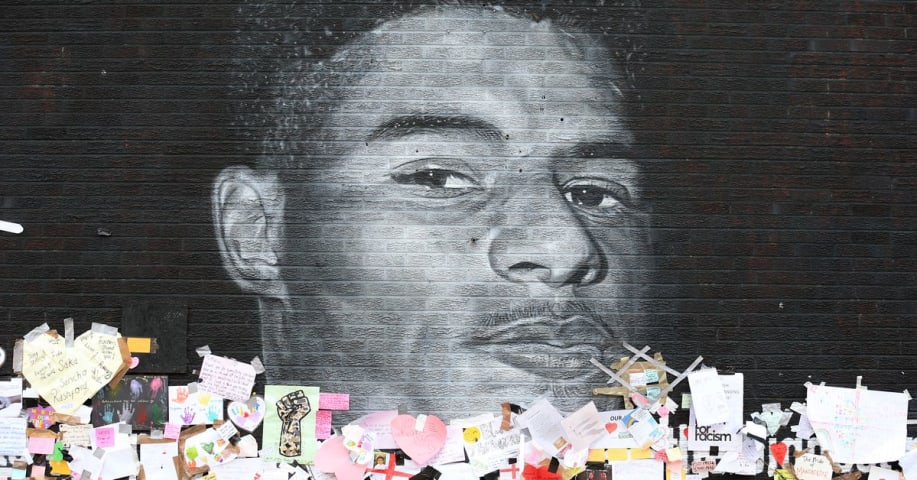

Alleged: Facebook, Instagram, and Twitter developed and deployed an AI system, which harmed Marcus Rashford, Jadon Sancho, Bukayo Saka, Facebook users, Instagram users, and Twitter users.

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI.

1.2. Exposure to toxic content

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

1. Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports


It wasn’t exactly surprising that hordes of social media trolls viciously attacked three Black players on England’s soccer team with racist comments and emojis after a historic loss on Sunday, July 11. What was unexpected was how quickly ev…


After another major incident of racist abuse online, targeted at members of the English football team, Facebook has provided a new overview of how it's working to address such attacks, and stop people from experiencing race-based abuse acro…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

