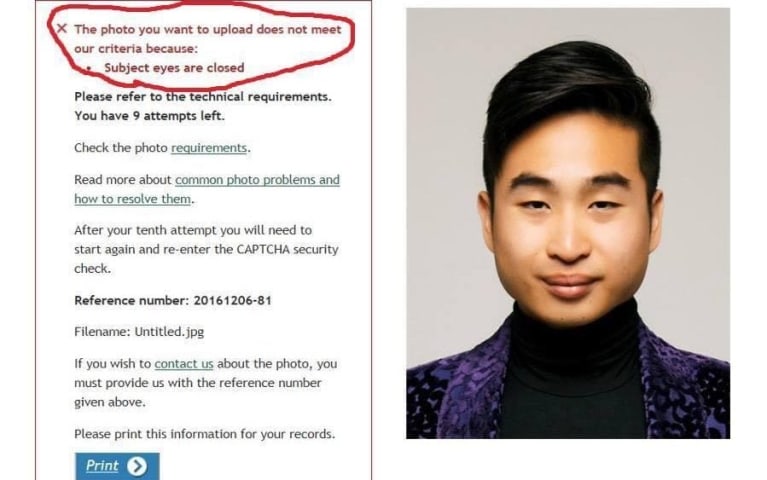

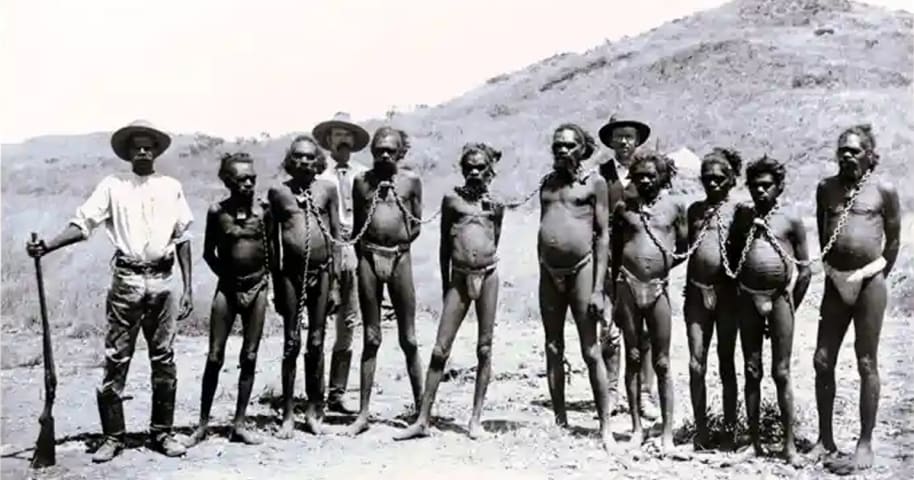

Description: A Facebook spokesperson acknowledged that the company's automated content moderation erroneously flagged an 1890s photo of Aboriginal men in chains for nudity, censoring an article that presented the photo as historical evidence of slavery in Australia and banning Australian users from posting it.

Entities

Alleged: Facebook developed and deployed an AI system, which harmed Facebook users sharing photo evidence of slavery and Facebook users.

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

1.1. Unfair discrimination and misrepresentation

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

Reports Timeline

Facebook has removed a photo of Aboriginal men used to prove racism in Australia, after claiming the photo included nudity.

The picture was shared online to prove racism had taken place in Australia off the back of the country’s prime minis…

The post was made in the context of Australian prime minister Scott Morrison claiming there was no slavery in Australia. A day later, he backtracked on those comments.

What was it about?

A Facebook user had posted a refutation of the a…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.

Seen something similar?

Similar Incidents

Defamation via AutoComplete

· 28 reports

Amazon Censors Gay Books

· 24 reports
