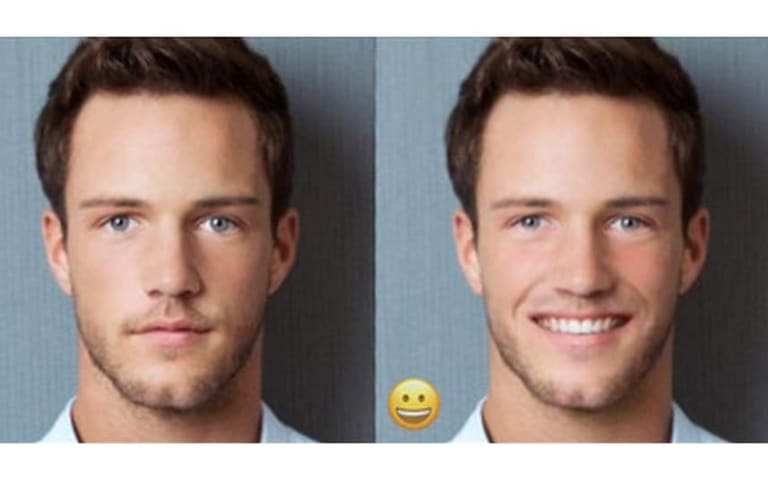

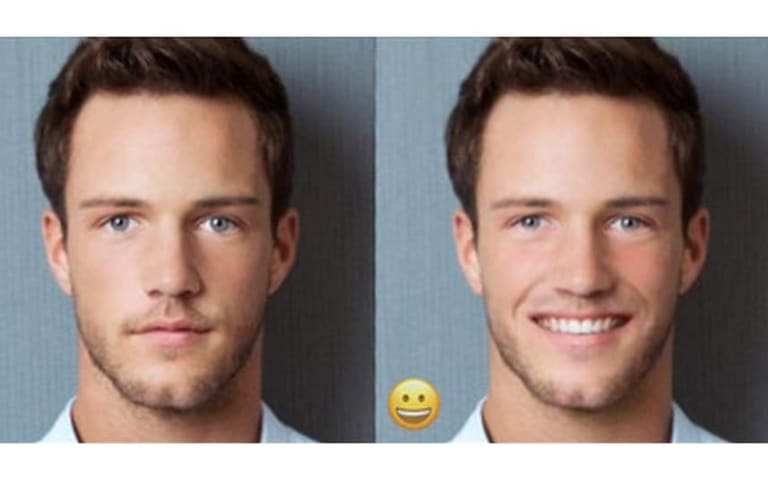

Description: FaceApp’s algorithm was reported by a user to have predicted different genders for two mostly identical facial photos with only a slight difference in eyebrow thickness.

Entities

Alleged: FaceApp developed and deployed an AI system, which harmed FaceApp non-binary presenting users, FaceApp transgender users, and FaceApp users.

CSETv1 Taxonomy Classifications

Incident Number

The number of the incident in the AI Incident Database.

273

Special Interest Intangible Harm

An assessment of whether a special interest intangible harm occurred. This assessment does not consider the context of the intangible harm, whether an AI was involved, or whether there is a characterizable class or subgroup of harmed entities. It also does not assess whether an intangible harm occurred in general. It only asks whether a special interest intangible harm occurred.

yes

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI.

1.1. Unfair discrimination and misrepresentation

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Discrimination and Toxicity

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports


I’d like to talk a little bit about algorithms, dysphoria, and dysmorphia.

I’ve struggled with algorithms. I’ll often take a picture and run it through FaceApp to get gendered.

I recently noticed that when my eyebrows are thin, it says I am…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.


Similar Incidents


Gender Biases of Google Image Search

· 11 reports


AI Beauty Judge Did Not Like Dark Skin

· 9 reports


FaceApp Racial Filters

· 23 reports
