Description: International testing organization ETS admits voice recognition results as evidence of cheating against thousands of past TOEIC test-takers, reportedly including people who were wrongfully accused, leading to their deportation without an appeal process or access to the evidence against them.

Entities

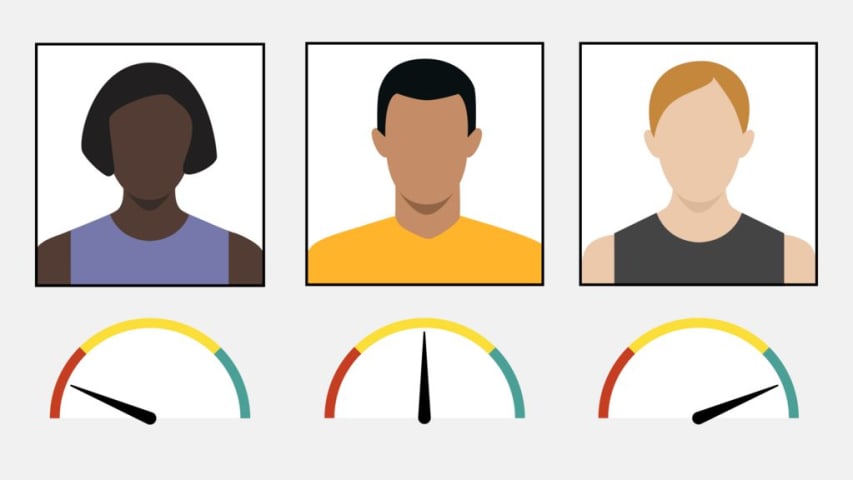

Alleged: ETS developed and deployed an AI system, which harmed UK ETS past test takers, UK ETS test takers and UK Home Office.

CSETv1 Taxonomy Classifications

Incident Number

The number of the incident in the AI Incident Database.

162

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

7.3. Lack of capability or robustness

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- AI system safety, failures, and limitations

Entity

Which, if any, entity is presented as the main cause of the risk

Human

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

A BBC investigation has raised fresh doubts about the evidence used to throw thousands of people out of the UK for allegedly cheating in an English language test.

Whistleblower testimony and official documents obtained by Newsnight reveal t…

Variants

A "variant" is an AI incident similar to a known case—it has the same causes, harms, and AI system. Instead of listing it separately, we group it under the first reported incident. Unlike other incidents, variants do not need to have been reported outside the AIID. Learn more from the research paper.
