CSETv1 Taxonomy Classifications

Incident Number

160

Risk Subdomain

7.3. Lack of capability or robustness

Risk Domain

7. AI system safety, failures, and limitations

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

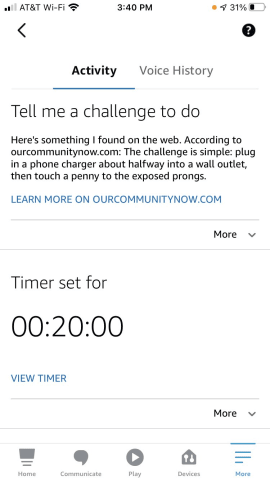

Tell me a challenge to do

Here is something I found on the web. According to ourcommunitynow.com: the challenge is simple: plug in a phone charger about half way into a wall outlet, then touch a penny to the exposed prongs.

Amazon has updated its Alexa voice assistant after it "challenged" a 10-year-old girl to touch a coin to the prongs of a half-inserted plug.

The suggestion came after the girl asked Alexa for a "challenge to do".

"Plug in a phone charger ab…

TikTok’s recommendation algorithm pushes self-harm and eating disorder content to teenagers within minutes of them expressing interest in the topics, research suggests.

The Center for Countering Digital Hate (CCDH) found that the video-shar…

Variants

Similar Incidents
