CSETv1 Taxonomy Classifications

Incident Number

125

GMF Taxonomy Classifications

Known AI Goal Snippets

Snippet Text: But a new cache of company records obtained by Reveal from The Center for Investigative Reporting – including internal safety reports and weekly injury numbers from its nationwide network of fulfillment centers – shows that company officials have profoundly misled the public and lawmakers about its record on worker safety. Related Classifications: Robotic Manipulation

Snippet Text: Not long after, Barrera was written up by a different manager for too much “Time Off Task,” Amazon’s system for tracking employee productivity. Related Classifications: Activity Tracking, Automatic Skill Assessment

Snippet Text: Minutes later her supervisor materialized to ask why she’d stopped scanning. “I was thinking, how did she know I was not scanning? Related Classifications: Activity Tracking

Known AI Goal Classification Discussion

Robotic Manipulation: Robots set too fast a pace.

Risk Subdomain

7.3. Lack of capability or robustness

Risk Domain

AI system safety, failures, and limitations

Entity

AI

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

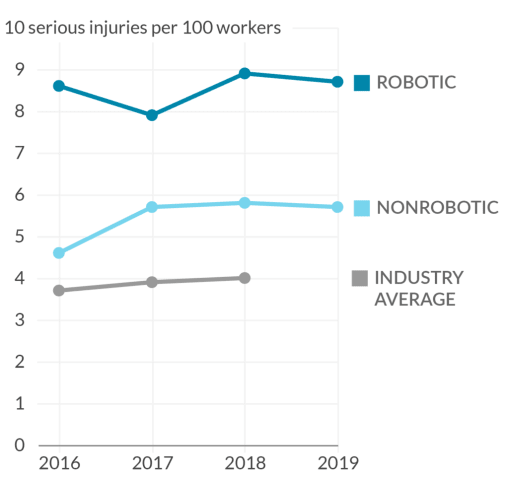

Robots. Prime Day. Holiday peak. Internal records show Amazon has deceived the public on rising injury rates among its warehouse workers.

On Cyber Monday 2014, Amazon operations chief Dave Clark proudly unveiled the company’s new warehouse …

Amazon’s warehouse injury rates have been secret for years despite mounting public concerns over labor practices and lack of worker safety. With access to a trove of new internal records, Reveal from The Center for Investigative Reporting i…

Warehouse workers in California are one step closer to being able to pee in peace. Yesterday, the state Senate voted 26 to 11 to pass AB 701, a bill aimed squarely at Amazon and other warehousing companies that track worker productivity. Th…

Variants

Similar Incidents
